Creative Testing (A 2026 Guide)

John Gargiulo

Running ads without testing your creatives is like guessing which product your customers want without ever asking them. You might get lucky once in a while, but you'll waste a lot of money in the process.

Creative testing gives you a system for learning what actually works. Instead of relying on gut instinct or copying what competitors are doing, you're making decisions based on real data from your own audience. This guide walks through how to set up a creative testing process that helps you find winners faster, understand why they work, and scale them without burning through your budget.

What is Creative Testing?

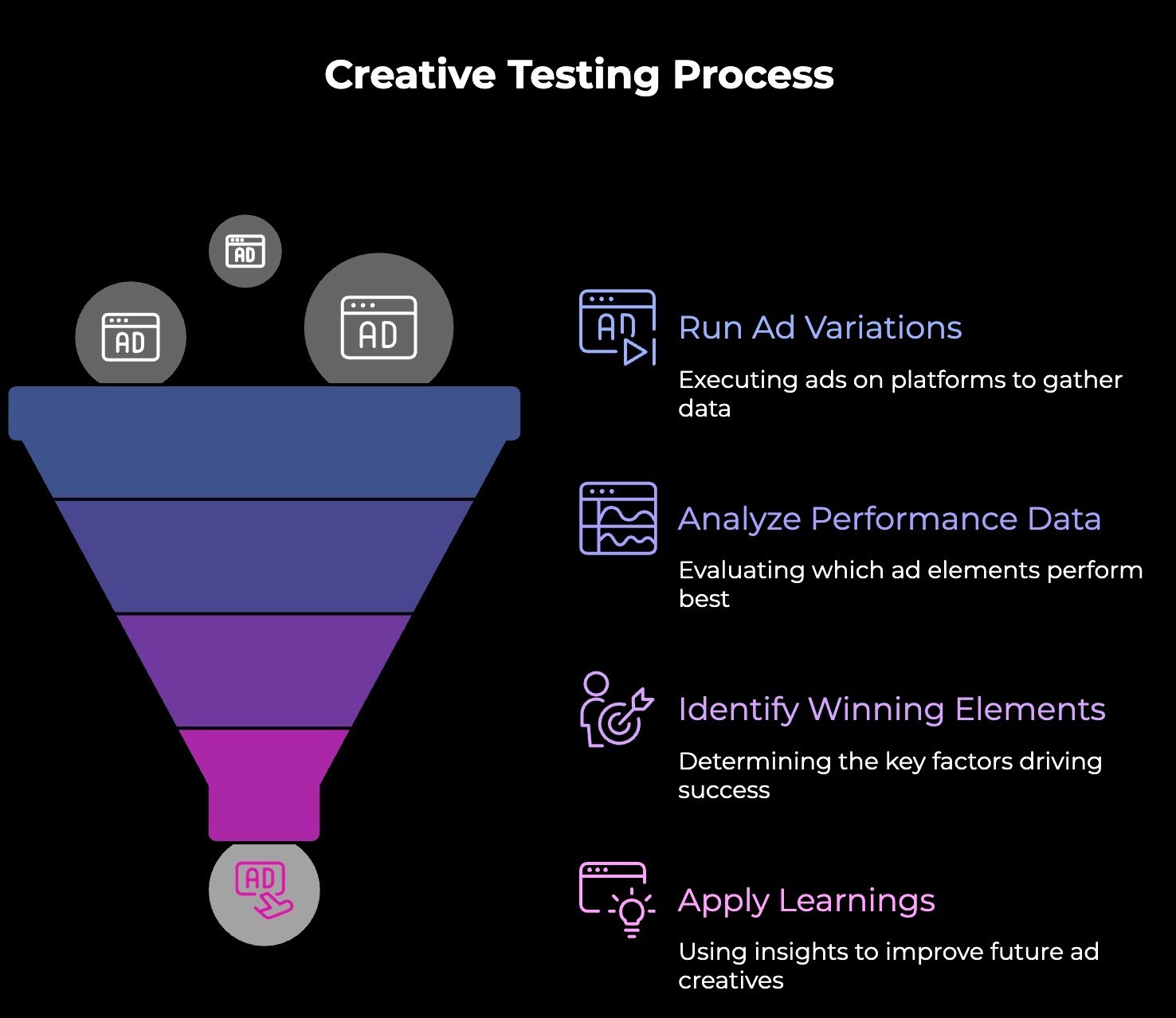

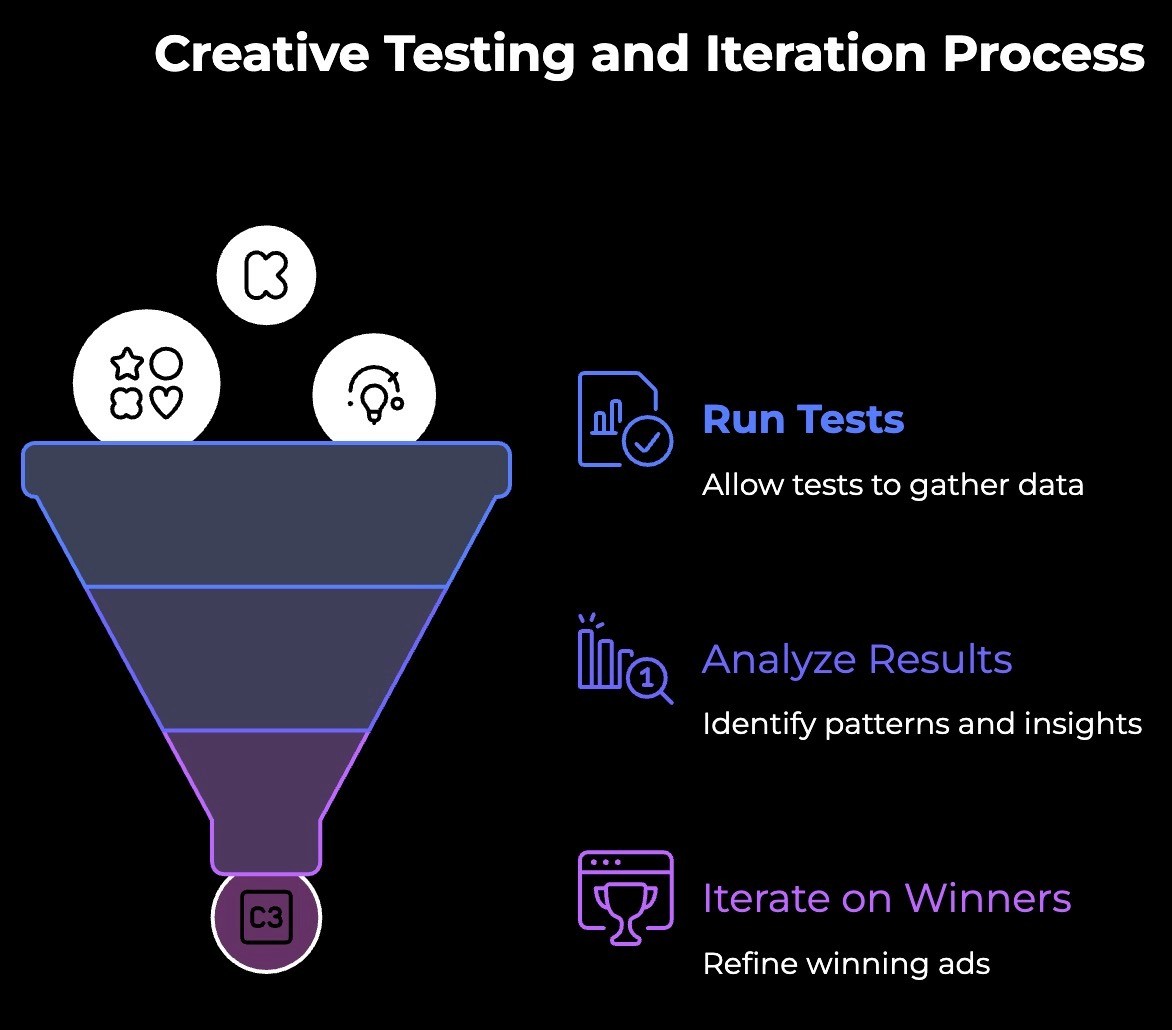

Creative testing is the process of running multiple ad variations to see which elements drive the best performance. Instead of launching one ad and hoping it works, you launch several versions with specific differences and let the data tell you what resonates.

The goal isn't just to find a winner.

It's to understand why something wins, so you can apply that learning to every ad you make going forward. That matters because platforms like Meta and TikTok reward relevance: their algorithms push ads that engage users and pull back on ads that don't. No amount of budget or audience targeting can save a creative that doesn't connect.

Creative is also the biggest lever you can pull. You can optimize your bidding strategy, refine your audiences, and tweak your landing pages, but none of that will matter if people scroll right past your ad. Testing helps you figure out what makes them stop.

How to Structure Your Creative Tests

There are five steps to building creative tests that help you run better ads and compound your learning over time. Let's walk through them.

Think in Concepts, Not Single Variables

The old playbook said to change one thing at a time: swap a headline, keep everything else identical, and measure the difference. That approach made sense when ad platforms distributed content based on audience targeting. It doesn't work the same way anymore.

Meta's Andromeda system uses visual and text embeddings to evaluate ads.

When two creatives look and sound too similar, the algorithm treats them as essentially the same ad and won't distribute them independently. Swapping three words in your hook while keeping identical visuals doesn't register as a new creative. You need to change both the copy and the visuals, especially in the first six seconds, for the platform to treat each variation as genuinely distinct.

This means testing needs to happen at the concept level.

A concept is the combination of one angle (the core message you're leading with) and one ICP (the specific audience you're speaking to). Within a concept, you can explore different executions. But the executions need to be visually and structurally different enough that the algorithm recognizes them as separate ads.
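To make the concept/execution split concrete, here is a minimal data model, assuming you track tests in code or a spreadsheet export. The class and field names (`Concept`, `Execution`, `opener_visual`, `opener_copy`) are hypothetical, and `is_valid_test` simply encodes the rules above: one concept per test, and every execution gets its own opener visual and its own hook copy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Concept:
    """One angle (the core message) paired with one ICP (the audience)."""
    angle: str
    icp: str


@dataclass(frozen=True)
class Execution:
    """One way of delivering a concept. Field names are hypothetical."""
    concept: Concept
    tactic: str         # e.g. "myth-busting", "skeptic", "transformation"
    opener_visual: str  # what the first six seconds show
    opener_copy: str    # the hook script or on-screen text


def is_valid_test(executions: list[Execution]) -> bool:
    """True if all executions share one concept and none reuses an opener visual or hook copy."""
    if len(executions) < 2:
        return False
    one_concept = len({e.concept for e in executions}) == 1
    distinct_visuals = len({e.opener_visual for e in executions}) == len(executions)
    distinct_copy = len({e.opener_copy for e in executions}) == len(executions)
    return one_concept and distinct_visuals and distinct_copy
```

Freezing the dataclasses makes them hashable, which is what lets the check collapse executions into sets of concepts, visuals, and copy.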

Two Types of Tests Worth Running

Concept-level testing is where the biggest learning happens. This means taking the same angle and ICP but delivering them through different creative approaches. You might test the same weight-loss message as:

A myth-busting tactic that confronts a common misconception with proof

A skeptic tactic that starts from honest doubt and resolves it with results

A transformation tactic that leads with a finished, verifiable outcome

Each of these uses a different psychological approach and requires different visuals, scripts, and structures. That's what gives the algorithm distinct signals to work with, and what gives you meaningful data about which type of persuasion resonates with your audience.

Execution-level testing works within a single concept to explore how delivery affects performance. You keep the same angle, ICP, and tactic, but change things like:

Creative format: Deliver the same message as a testimonial, a listicle, a product walkthrough, or a street interview

Visual style: Test UGC lo-fi against polished studio footage or animated visuals

Narration driver: Compare voiceover-led ads against text-driven or talking-to-camera versions

Tone and pacing: Try an empathetic, slow-paced version against an educational, fast-paced one
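One way to turn these dimensions into a concrete execution-level test is to enumerate their combinations and keep only a few that sit far apart. The sketch below assumes the angle, ICP, and tactic are held fixed outside the function, and it greedily picks up to five combinations that each differ from every earlier pick in at least three dimensions. The greedy rule and both thresholds are illustrative assumptions, not a method from this guide, and each resulting variation still needs its own opener visuals and copy.

```python
from itertools import product

# Example options per execution dimension, taken from the list above.
# Angle, ICP, and tactic are assumed fixed and are not modeled here.
DIMENSIONS = {
    "format": ["testimonial", "listicle", "product walkthrough", "street interview"],
    "visual_style": ["UGC lo-fi", "studio", "animated"],
    "narration": ["voiceover", "on-screen text", "talking to camera"],
    "tone": ["empathetic, slow", "educational, fast"],
}


def differences(a: dict[str, str], b: dict[str, str]) -> int:
    """Count how many dimensions two variations differ on."""
    return sum(a[key] != b[key] for key in a)


def plan_variations(dimensions: dict[str, list[str]],
                    max_variations: int = 5,
                    min_differences: int = 3) -> list[dict[str, str]]:
    """Greedily pick up to `max_variations` combinations, each differing from
    every earlier pick in at least `min_differences` dimensions."""
    keys = list(dimensions)
    plan: list[dict[str, str]] = []
    for combo in product(*(dimensions[key] for key in keys)):
        candidate = dict(zip(keys, combo))
        if all(differences(candidate, chosen) >= min_differences for chosen in plan):
            plan.append(candidate)
            if len(plan) == max_variations:
                break
    return plan
```

Raising `min_differences` spreads the variations further apart but tends to yield fewer of them.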

The key rule: always change the visuals alongside any copy changes, especially in the opener. If the first six seconds look the same to the algorithm's embeddings, your test won't get a clean distribution, no matter how different the script reads on paper.
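Meta doesn't publish how Andromeda's embeddings are computed or what it treats as "too similar", so you can't reproduce its judgment. A rough pre-launch sanity check is still possible. The minimal sketch below assumes you already have an embedding vector for each variation's opener (from any image or text embedding model you choose; that step isn't shown) and flags pairs whose cosine similarity exceeds a hypothetical cutoff of 0.9.

```python
import math

# Hypothetical cutoff: an illustrative assumption, not a value published by Meta.
SIMILARITY_THRESHOLD = 0.9


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def near_duplicates(embeddings: dict[str, list[float]],
                    threshold: float = SIMILARITY_THRESHOLD) -> list[tuple[str, str, float]]:
    """Return every pair of variations whose opener embeddings exceed the threshold."""
    names = sorted(embeddings)
    flagged = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            score = cosine_similarity(embeddings[first], embeddings[second])
            if score > threshold:
                flagged.append((first, second, score))
    return flagged
```

Two hooks that differ by a few words over identical footage would typically score close to 1.0 and get flagged. The threshold is a tuning knob for your own workflow, not a platform rule.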

Create Enough Variations to See Patterns

Running two versions of anything tells you which one is better, but not much about why. Three to five genuinely distinct variations within a concept start to reveal patterns.

For example, if you're testing a fitness app and your top performers both use a problem-focused tactic with UGC visuals while the bottom performers use aspiration-focused tactics with polished footage, you've learned something bigger than which ad won. You've learned that your audience responds to raw, relatable problem awareness over aspirational polish. That insight shapes every concept you build next.
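Spotting patterns like this gets easier if you tag each variation with its attributes (tactic, visual style, and so on) and average a metric per tag. A minimal sketch, assuming results are plain dictionaries with hypothetical keys such as `tactic`, `visual`, and `ctr`:

```python
from collections import defaultdict
from statistics import mean


def average_by(results: list[dict], attribute: str, metric: str) -> dict[str, float]:
    """Average one metric across all variations that share an attribute value."""
    groups: dict[str, list[float]] = defaultdict(list)
    for row in results:
        groups[row[attribute]].append(row[metric])
    return {value: mean(scores) for value, scores in groups.items()}


def pattern_report(results: list[dict], attributes: list[str], metric: str) -> dict[str, dict[str, float]]:
    """Run average_by for several attributes at once, e.g. tactic and visual style."""
    return {attribute: average_by(results, attribute, metric) for attribute in attributes}
```

With only three to five variations per concept these averages are noisy, so treat them as hypotheses for the next concept rather than settled findings.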

Give Tests Enough Room to Run

Killing tests too early is one of the fastest ways to waste money on bad data. An ad that looks like a loser after 48 hours might stabilize once the algorithm finds its audience. An ad that looks great at 500 impressions might fall apart at 5,000.

Aim for at least 1,000 to 2,000 impressions per variation before drawing conclusions. For conversion-focused tests, wait for 20 to 30 conversions per variation. Set your test duration upfront, typically three to seven days, and resist the urge to make changes mid-flight.
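These thresholds can be written as a simple gate to check before calling a test. This sketch uses the low end of each range (1,000 impressions, 20 conversions) plus a duration fixed before launch; the names `VariationStats`, `ready_to_judge`, and `all_variations_ready` are hypothetical.

```python
from dataclasses import dataclass

# Lower ends of the ranges suggested above; raise them if your budget allows.
MIN_IMPRESSIONS = 1_000
MIN_CONVERSIONS = 20


@dataclass
class VariationStats:
    impressions: int
    conversions: int
    days_live: int


def ready_to_judge(stats: VariationStats, planned_days: int, conversion_test: bool) -> bool:
    """True once a variation has run its planned duration and hit the data thresholds."""
    if stats.days_live < planned_days:
        return False
    if stats.impressions < MIN_IMPRESSIONS:
        return False
    if conversion_test and stats.conversions < MIN_CONVERSIONS:
        return False
    return True


def all_variations_ready(variations: list[VariationStats], planned_days: int,
                         conversion_test: bool) -> bool:
    """Compare variations only when every one of them is ready."""
    return all(ready_to_judge(v, planned_days, conversion_test) for v in variations)
```

If the planned window ends before the thresholds are met, the gate keeps returning `False`; whether to extend the test or accept lower confidence is a judgment call the sketch leaves to you.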

When a Winner Emerges, Iterate

When an ad starts performing, the next move isn't to leave it alone and hope it keeps working. It's to iterate: protect what converts and refresh what decays.

Iteration means building on a validated winner to extend its lifespan. You might update visuals while keeping the same script, swap footage while preserving the voiceover, or recut the opener based on hook rate data. The goal is to keep the core concept alive while preventing fatigue.

Performance metrics guide every decision. Hook rate tells you whether the opener needs work. Post-hook retention shows if the middle is holding. Average view time signals whether the ad should be shorter or longer. Each iteration targets whichever metric is underperforming.

A winning ad isn't an endpoint. It's the starting point for a new family of variations.
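The metric-to-fix mapping described above can be sketched as a small rule set. The benchmark values are hypothetical placeholders (swap in medians from your own account), and so is the rule that an average view time below half the ad's length suggests a shorter cut while one above 90% suggests trying a longer one.

```python
# Hypothetical benchmarks: replace with medians from your own account history.
BENCHMARKS = {
    "hook_rate": 0.30,            # share of impressions that watch past the opener
    "post_hook_retention": 0.40,  # share of hooked viewers still watching mid-ad
    "shorter_below": 0.50,        # avg view time / ad length below this: try a shorter cut
    "longer_above": 0.90,         # above this: viewers may sit through a longer cut
}


def iteration_targets(metrics: dict[str, float],
                      benchmarks: dict[str, float] = BENCHMARKS) -> list[str]:
    """Map each underperforming metric to the part of the ad to iterate on."""
    targets = []
    if metrics["hook_rate"] < benchmarks["hook_rate"]:
        targets.append("recut the opener")
    if metrics["post_hook_retention"] < benchmarks["post_hook_retention"]:
        targets.append("rework the middle")
    watched = metrics["avg_view_seconds"] / metrics["length_seconds"]
    if watched < benchmarks["shorter_below"]:
        targets.append("test a shorter cut")
    elif watched > benchmarks["longer_above"]:
        targets.append("test a longer cut")
    return targets
```

An empty list means no metric is below benchmark, which is the case where you refresh visuals or footage mainly to stay ahead of fatigue.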

What Metrics to Focus On

The right metric depends on your goal, but here are the most useful ones for creative testing:

Hook rate measures the percentage of people who watch past the first few seconds. This tells you if your opening is working. For TikTok ads, look at the two-second view rate. On Meta, divide 3-second video plays by impressions, or check the video retention curve.

Click-through rate shows how compelling your overall message is. A high hook rate but low CTR means people are watching but not interested enough to act.

Cost per acquisition is the ultimate measure, but it requires more data to stabilize. Use CPA to evaluate your top performers once you've narrowed the field with faster metrics like hook rate and CTR.

Don't get distracted by vanity metrics like impressions or reach. They tell you how much you spent, not how well your creative performed.
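The three core metrics reduce to simple ratios, and the "narrow the field, then judge on CPA" workflow can be sketched on top of them. This assumes `hook_views` holds whichever hook metric you pick per platform (for example, TikTok's 2-second views). The shortlist rule (rank by hook rate, break ties with CTR, keep the top three) is an illustrative choice, not something this guide prescribes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdResults:
    impressions: int
    hook_views: int  # views past the opener; the platform metric used is your choice
    clicks: int
    conversions: int
    spend: float


def hook_rate(r: AdResults) -> float:
    """Share of impressions that watched past the opener."""
    return r.hook_views / r.impressions if r.impressions else 0.0


def ctr(r: AdResults) -> float:
    """Click-through rate: clicks per impression."""
    return r.clicks / r.impressions if r.impressions else 0.0


def cpa(r: AdResults) -> Optional[float]:
    """Cost per acquisition; None until the ad has at least one conversion."""
    return r.spend / r.conversions if r.conversions else None


def shortlist_then_rank(results: dict[str, AdResults], keep: int = 3) -> list[str]:
    """Narrow the field by hook rate (CTR as tiebreaker), then order survivors by CPA, lowest first."""
    fast = sorted(results, key=lambda name: (hook_rate(results[name]), ctr(results[name])),
                  reverse=True)[:keep]
    return sorted((name for name in fast if cpa(results[name]) is not None),
                  key=lambda name: cpa(results[name]))
```

Shortlisted ads with no conversions yet drop out of the CPA ranking rather than being scored as free, which keeps the comparison honest until they have data.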

Create Winning Ads Faster with Airpost

Creative testing only works if you can produce enough variations to actually learn something. Testing two or three ads a month won't get you there. You need volume, and you need it consistently, without spending weeks in production or blowing your budget on agency fees.

That's what Airpost is built for.

It is a hybrid creative platform that pairs AI-powered ad generation with expert creative strategists. You set up a dynamic Master Brief, and Airpost delivers 10 to 30+ new video ads every week based on it. When you update the brief, the ads change with it.

Here's how it works:

Expert creative strategists: dedicated strategists manage your account, refine your briefs, and monitor what's performing so your ads improve over time

Real footage plus AI: your assets combined with Airpost's library of over 300,000 clips and AI-generated footage from a team dedicated to your brand

Automatic iteration on winners: when an ad starts performing, Airpost generates new variations based on it to help you scale what works

Brand safety built in: dedicated strategists and a Disclaimers feature ensure compliance is handled

Airpost is trusted by brands like DoorDash and backed by investors including Lux Capital, WPP, and Stripe.

Book a demo with Airpost to see how we combine AI and creative strategy to help you test more, learn faster, and scale your winning ads.