Sora 2 promises something many marketers have been waiting for: AI video that can handle more than short clips or isolated moments. It aims to generate longer scenes, maintain visual consistency, and follow complex prompts with fewer breakdowns across time.

The real question is whether those strengths hold up once you move from demos to day-to-day marketing use.

That's what we'll look at in this post: a review of Sora 2 specifically for marketers. We'll cover what it’s good at, where it struggles, and how usable it actually is when the goal is to produce video ads, whether for a product, an event, or PR, that bring better results.

What is Sora 2?

Sora 2 is OpenAI’s second-generation text-to-video model designed to generate realistic video scenes from written prompts. Compared to earlier AI video tools, it focuses less on short, visually flashy clips and more on simulating the physical world in a believable way.

Motion, gravity, object interaction, and camera behavior are handled with more consistency, which makes scenes feel grounded. It can also generate synchronized audio, including dialogue and ambient sound, alongside visuals.

What Sora 2 Does Well for Marketers

Sora 2 works best when you treat it as a high-speed concept engine, not a full ad production system. Its real value shows up early in the creative process, when teams need to explore ideas, visualize direction, and make faster decisions.

Faster Concept-to-Visual Translation

Sora 2 significantly shortens the gap between an idea and something you can react to visually. Instead of debating concepts abstractly, marketers can generate clips that make tone, mood, and setting tangible within minutes.

This is especially useful when:

Testing multiple creative directions for the same hook

Exploring different “worlds” for a campaign before committing budget

Aligning stakeholders who struggle to visualize ideas from copy alone

What changes in practice is how decisions get made. Creative reviews move away from opinions and hypotheticals and toward concrete reactions. Instead of asking, “Do you think this could work?” teams are reacting to something they can actually see.

This also reduces wasted production effort. Weak ideas are exposed earlier, before time is spent refining scripts, building storyboards, or briefing external teams. Strong ideas surface faster because they don’t need as much explanation to earn buy-in. Sora 2 fits best in early-stage ideation for AI ads, where speed matters more than final polish.

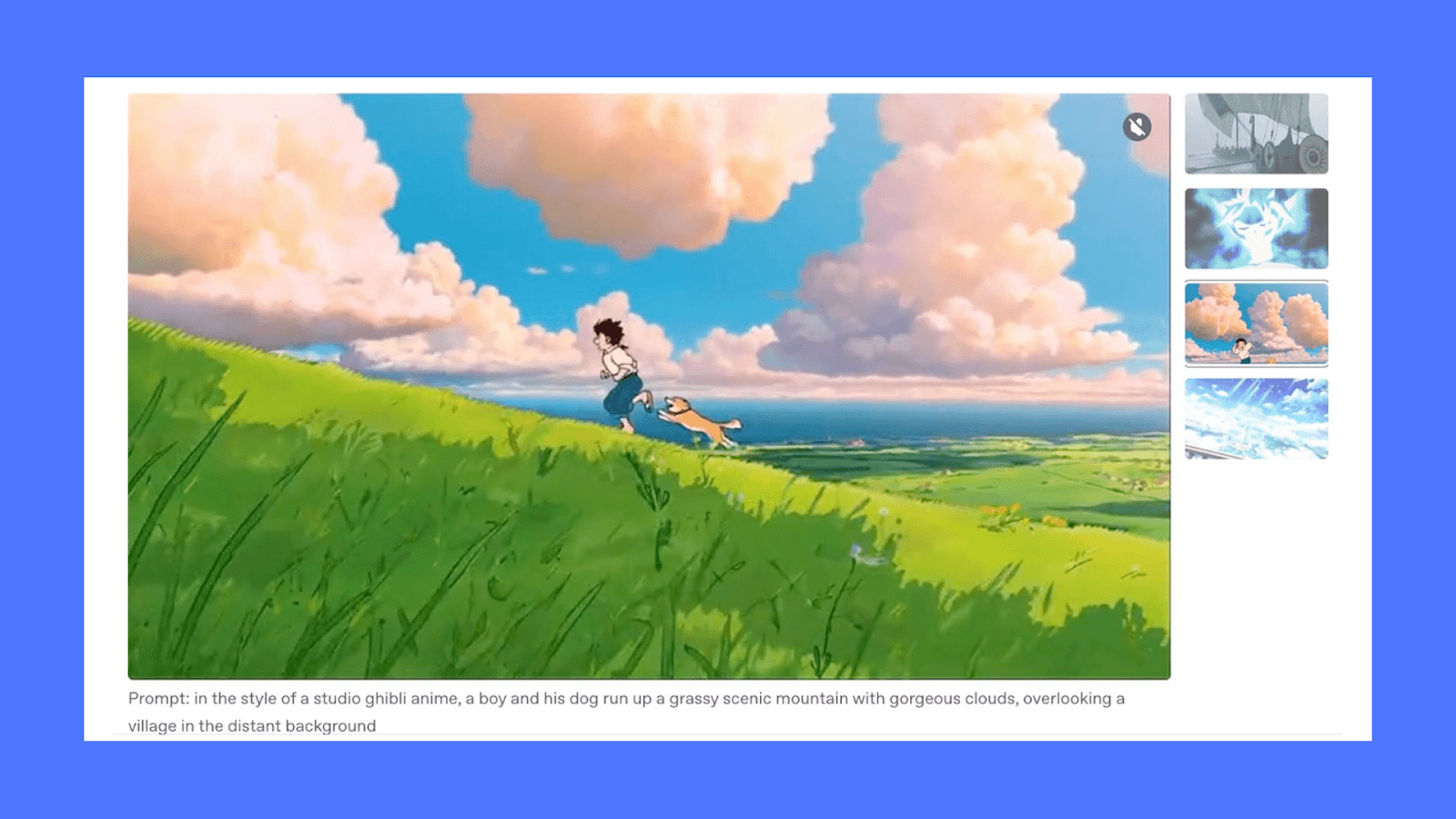

More Convincing Physical Motion Than Earlier Models

One of the quiet but important upgrades in Sora 2 is how it handles motion and physics. Actions like walking, falling, pouring, or turning now behave closer to how they would in the real world.

For marketers, this matters because:

Scenes feel grounded instead of floaty

Movement doesn’t immediately signal “AI-generated”

Simple product interactions look believable enough for paid feeds

Here's an example of a video created with Sora 2

Better Control When You Prompt Like a Creative Director

Sora 2 responds well to structured direction. When you specify camera language, pacing, and style, the model is more likely to deliver usable output.

You can reliably prompt for:

Shot types like push-ins, static wides, or handheld motion

Cinematic, realistic, or lightly stylized looks

Clear subject focus with minimal visual noise

This level of controllability makes it easier to generate clips that feel intentional rather than random, which is critical when content needs to sit inside a broader brand system.
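One way to keep that direction consistent across a team is to fill in the same fields every time before prompting. The sketch below is only an illustration: the `ShotBrief` class, its field names, and the output format are hypothetical, not a Sora 2 requirement or an OpenAI API. It simply assembles a text prompt.

```python
from dataclasses import dataclass


@dataclass
class ShotBrief:
    """Hypothetical container for the direction a creative director would give."""
    subject: str
    shot_type: str  # e.g. "slow push-in", "static wide", "handheld"
    look: str       # e.g. "cinematic", "realistic", "lightly stylized"
    pacing: str     # e.g. "unhurried", "quick cuts"

    def to_prompt(self) -> str:
        # Join the fields into one structured prompt string.
        return (
            f"Subject: {self.subject}. "
            f"Camera: {self.shot_type}. "
            f"Look: {self.look}. "
            f"Pacing: {self.pacing}. "
            "Keep a single clear subject with minimal background clutter."
        )


brief = ShotBrief(
    subject="a ceramic mug being filled with coffee on a kitchen counter",
    shot_type="slow push-in",
    look="realistic",
    pacing="unhurried",
)
print(brief.to_prompt())
```

Swapping only `shot_type` or `look` between runs makes it easier to compare creative directions for the same subject.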

Native Audio Helps Evaluate Ideas Faster

Having dialogue, ambience, and sound effects generated alongside visuals changes how quickly teams can judge a concept. Even if the final ad will use a different voiceover or sound mix, native audio makes it easier to assess pacing and emotional tone.

This reduces early-stage friction because you don’t need placeholder audio to test realism. Narrative clips are easier to evaluate in context.

Where Sora 2 Struggles for Marketers

Sora 2 is powerful, but its limitations become clear once you move beyond experimentation and into real marketing workflows. These gaps matter most when consistency, speed, and scale are non-negotiable.

Not Reliable Enough for Final Ad Output

Sora 2 can generate impressive clips, but consistency drops when you try to use it as a final production tool. Two generations from similar prompts can vary more than most marketing teams are comfortable with, especially when brand standards are tight.

This shows up in small but costly ways. Framing shifts slightly. Lighting changes between clips. Visual tone drifts just enough to trigger review feedback. For one-off content, this may be acceptable. For campaigns that need multiple variations, it creates friction.

As a result, Sora 2 works best upstream. When teams try to push its output directly into paid ads without a strong editing layer, quality control becomes the bottleneck.

Long-Form and Multi-Scene Control Is Still Fragile

While Sora 2 handles longer clips better than earlier models, it still struggles with narrative continuity across scenes. Characters, environments, and pacing can subtly drift when you try to extend a story beyond a single moment.

Common issues marketers run into include:

Characters changing appearance between scenes

Camera logic breaking across cuts

Emotional tone resetting mid-sequence

This limits its usefulness for structured ad formats like testimonials, walkthroughs, or story-led commercials where continuity matters more than visual novelty.

Brand Systems Have to Be Enforced Manually

Sora 2 does not understand brand rules. It doesn’t know your color palette, logo spacing, typography hierarchy, or pacing norms unless those are enforced after generation.

That means:

Brand consistency still depends on human review

Outputs need downstream editing to stay on-brand

Scaling variations increases review time rather than reducing it

For marketers running high-volume campaigns, this becomes a real operational cost. The tool accelerates idea generation, but it does not replace the systems required to keep ads consistent across placements, regions, and audiences.

Sora 2 Pricing: What Marketers Should Budget For

Sora 2 pricing is usage-based and tied directly to video generation time. There are no bundled plans for marketing use cases. You pay for how many seconds of video you generate.

OpenAI lists all Sora pricing under the Sora Video API.

Sora Video API Pricing (Per Second)

There are three combinations of model tier and resolution:

→ Sora 2 (standard)

Resolution: 720×1280 (portrait) or 1280×720 (landscape)

Cost: $0.10 per second

→ Sora 2 Pro (standard resolution)

Resolution: 720×1280 or 1280×720

Cost: $0.30 per second

→ Sora 2 Pro (higher resolution)

Resolution: 1024×1792 (portrait) or 1792×1024 (landscape)

Cost: $0.50 per second

Because Sora charges per second, costs scale linearly with clip length. A 10-second video costs about $1.00 on Sora 2, $3.00 on Sora 2 Pro at standard resolution, and $5.00 on Sora 2 Pro at the higher resolution.

The pricing applies per generation, not per finished ad. If a clip takes multiple attempts to get right, each attempt is billed separately.
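As a rough budgeting sketch, the per-second rates quoted above can be multiplied by clip length and number of attempts. The tier labels and the `generation_cost` helper are made up for this example, and the rates are the ones listed in this post, so confirm current pricing before relying on them.

```python
# Per-second rates in USD as quoted in this post; tier labels are hypothetical.
RATES = {
    "sora-2": 0.10,
    "sora-2-pro": 0.30,
    "sora-2-pro-hires": 0.50,
}


def generation_cost(tier: str, seconds: float, attempts: int = 1) -> float:
    """Estimate spend: every attempt is billed for the full clip length."""
    return round(RATES[tier] * seconds * attempts, 2)


print(generation_cost("sora-2", 10))                 # one 10-second clip
print(generation_cost("sora-2-pro", 10, attempts=4)) # four tries at the same clip
```

Four attempts at a 10-second Sora 2 Pro clip come to $12.00 under these rates, even though only one of them ends up in the ad.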

The per-second price looks reasonable in isolation, but real marketing usage adds friction:

Iteration multiplies the cost quickly

Multiple cuts are needed for different placements

Testing variations means regenerating video, not just editing

Sora pricing covers video generation only. It does not include editing, resizing, captioning, hook variations, or testing infrastructure. Once teams move from experimentation to volume, generation cost becomes only one part of the creative budget.

Sora 2 is affordable for single clips and exploration, but costs rise quickly when it is used for iterative ad production. For marketers, the real question isn’t just cost per second. It is also how much usable ad creative they can reliably produce from that spend.
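To make that comparison concrete, total generation spend can be divided by the number of clips that actually pass review. This is a minimal sketch: the function name and the sample numbers (20 generations, 5 usable) are illustrative assumptions, not measured results.

```python
def cost_per_usable_clip(rate_per_second: float, seconds: float,
                         generations: int, usable: int) -> float:
    """Total generation spend divided by the clips that survive review."""
    if usable <= 0:
        raise ValueError("need at least one usable clip to compute a cost")
    total_spend = rate_per_second * seconds * generations
    return round(total_spend / usable, 2)


# Hypothetical scenario: 20 ten-second generations at $0.30/s, 5 pass review.
print(cost_per_usable_clip(0.30, 10, generations=20, usable=5))
```

In this made-up scenario each usable clip costs $12.00, four times the $3.00 sticker price of a single generation.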

Turn Sora 2 Videos Into Ads That Perform

Sora 2 is strong at generating longer, more coherent videos. But like most AI video tools, it stops at generation.

For marketers, the real work starts after the clip exists: shaping it into an ad, testing variations, adapting it across placements, and scaling what works. Sora 2 doesn’t handle that layer. It produces video, not campaigns.

That’s where tools like Airpost come in.

Airpost turns AI-generated footage into structured, test-ready ads. Instead of prompting endlessly or managing edits manually, teams work from a living brief.

Creative strategists guide the system, and Airpost's proprietary ad taxonomy categorizes every creative by format, hook type, angle, and performance patterns so you always know what's working and why. New variations get generated automatically as winners emerge, ads are resized to every placement format, and a library of 300,000+ real footage clips fills gaps where AI-generated shots fall short.

Book a demo with Airpost to see how you can create winning ads at scale.