Generating AI video ads is no longer the hard part. Most teams can produce clips and variations quickly, with little friction. The challenge starts once volume increases and performance becomes harder to maintain.

This guide focuses on what happens after the first few tests. It looks at the practical decisions teams need to make when scaling AI video ads across campaigns, formats, and audiences. The goal is not just experimentation but repeatable video output that brings in customers.

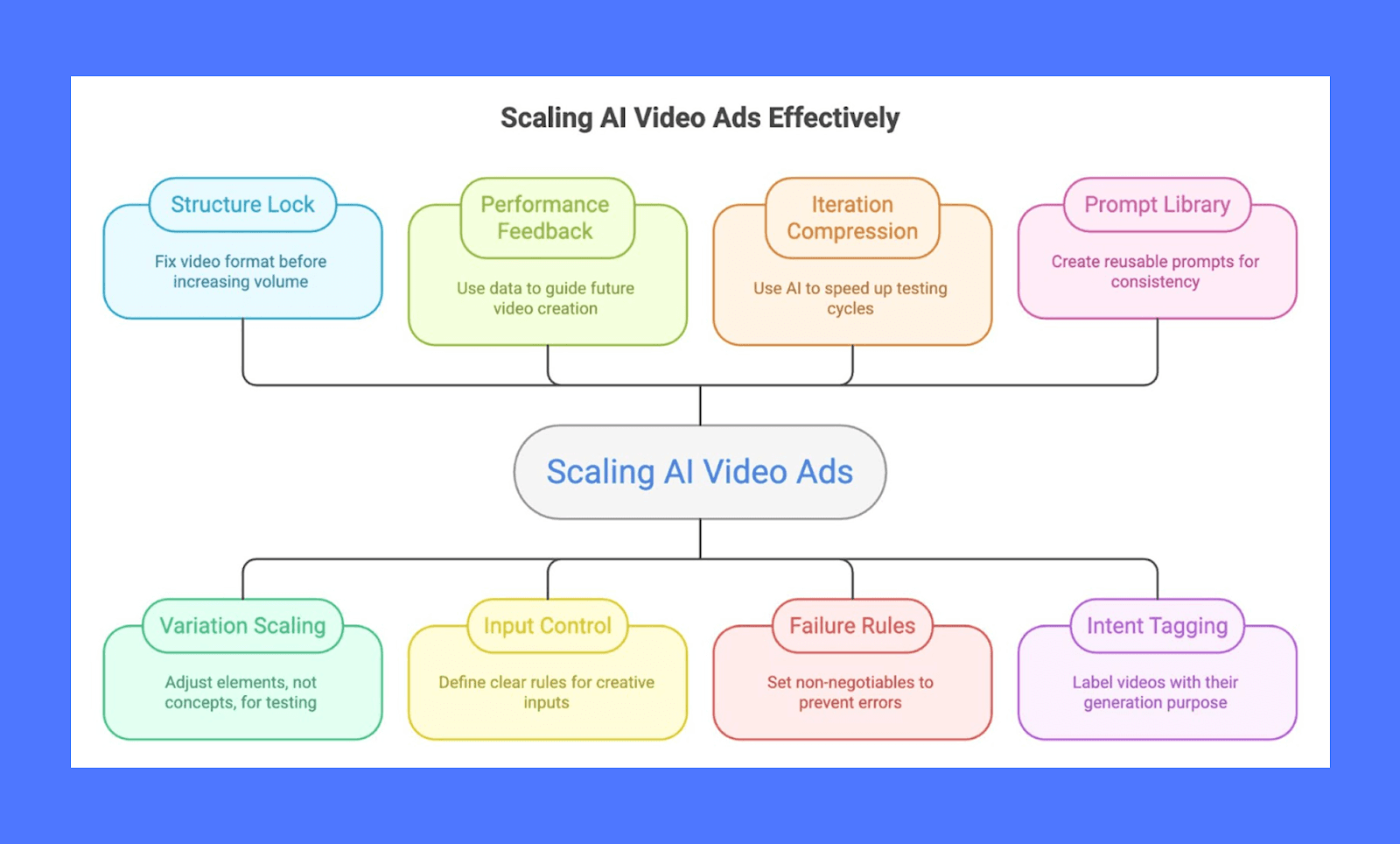

Below are the best practices teams use to scale AI video ads without losing clarity, learnings, or control as creative volume increases.

8 Best Practices to Scale AI Video Ads in 2026

Scaling AI video ads requires clear inputs, controlled testing, and a system that can handle higher creative volume. These best practices focus on how teams scale video output while keeping performance, learning, and quality intact.

1. Lock the structure before you scale volume

Before increasing output, teams need to decide what a “good” video looks like in concrete terms. That usually means fixing the basics early. Hook length, first frame style, subtitle placement, pacing, and where the CTA appears should not change every time a new video is generated.

When structure keeps shifting, performance data becomes hard to trust. A weak result could come from the idea, the pacing, or the framing, and there is no clear way to isolate the cause. Fixed structure removes that confusion.

Most teams start by choosing one or two proven formats and sticking to them for several weeks. The content inside the frame changes, but the frame itself stays familiar to both the platform and the audience. This approach also reduces review time, since fewer elements need approval. Scaling works more smoothly when the structure is treated as a rule, not a suggestion.

2. Give the algorithm more to work with, not less

Traditional testing says to keep the core idea intact and change one element at a time. That made sense when teams were doing the learning themselves, manually comparing results from small test sets.

Meta's Andromeda system (the ad retrieval system that selects which ads to show each user) works differently. It scores tens of thousands of ads per impression, looking for the right creative across different pockets of inventory. Near-identical variations limit which of those pockets it can serve. The algorithm learns faster with distinct creative, not subtle tweaks.

That changes how scaling should work. Instead of ten versions of the same concept with slightly different hooks, the goal is ten concepts that approach the product from fundamentally different angles:

Different storytelling structures

Different personas and audience segments

Different emotional entry points

Different value prop framing

Each creative should still connect to a clear audience and message. But variation happens at the concept level, not the caption level. You give Andromeda a wider range of inputs and let it find what performs.

This is one of the reasons platforms like Airpost focus on generating high volumes of genuinely diverse creative rather than endless iterations of the same ad. Instead of manually testing one variable at a time, you feed the system a wider range of concepts and let it do the optimization work for you.

3. Feed performance data back into the system

Scaling breaks down when past results are ignored. Winning videos contain clues that should guide the next batch.

Teams that scale well review performance weekly and pull out specifics. Which hooks kept people watching past three seconds? Which captions drove clicks? Which visuals caused a drop-off? Those details are then reused intentionally.

This does not require advanced tooling. A shared doc or spreadsheet is enough if it is kept current. The key is consistency. Insights only help when they are applied repeatedly.

Over time, this creates momentum. New videos start closer to proven patterns instead of starting from zero. Performance stabilizes because fewer experiments are truly untested. Scaling becomes less risky when learning compounds instead of resetting.
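As a rough illustration of that weekly review, the sketch below ranks hooks by how many viewers were still watching at three seconds. The field names (`hook`, `impressions`, `views_3s`) and every number are made-up placeholders, not the export format of any ad platform.

```python
from collections import defaultdict

# Hypothetical weekly log, e.g. rows copied out of a shared spreadsheet.
results = [
    {"hook": "question", "impressions": 1000, "views_3s": 420},
    {"hook": "question", "impressions": 800, "views_3s": 300},
    {"hook": "bold claim", "impressions": 1200, "views_3s": 360},
    {"hook": "demo first", "impressions": 500, "views_3s": 275},
]

def hold_rate_by_hook(rows):
    """Return {hook: share of impressions still watching at three seconds}."""
    totals = defaultdict(lambda: [0, 0])  # hook -> [impressions, views_3s]
    for row in rows:
        totals[row["hook"]][0] += row["impressions"]
        totals[row["hook"]][1] += row["views_3s"]
    return {
        hook: views / imps
        for hook, (imps, views) in totals.items()
        if imps > 0  # skip hooks with no delivery to avoid dividing by zero
    }

def rank_hooks(rows):
    """Hooks sorted from strongest to weakest three-second hold rate."""
    rates = hold_rate_by_hook(rows)
    return sorted(rates, key=rates.get, reverse=True)

print(rank_hooks(results))  # ['demo first', 'question', 'bold claim']
```

The same grouping works for captions or visuals by swapping the key. A spreadsheet pivot table does the same job; the point is that the ranking gets rebuilt every week and feeds the next batch.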

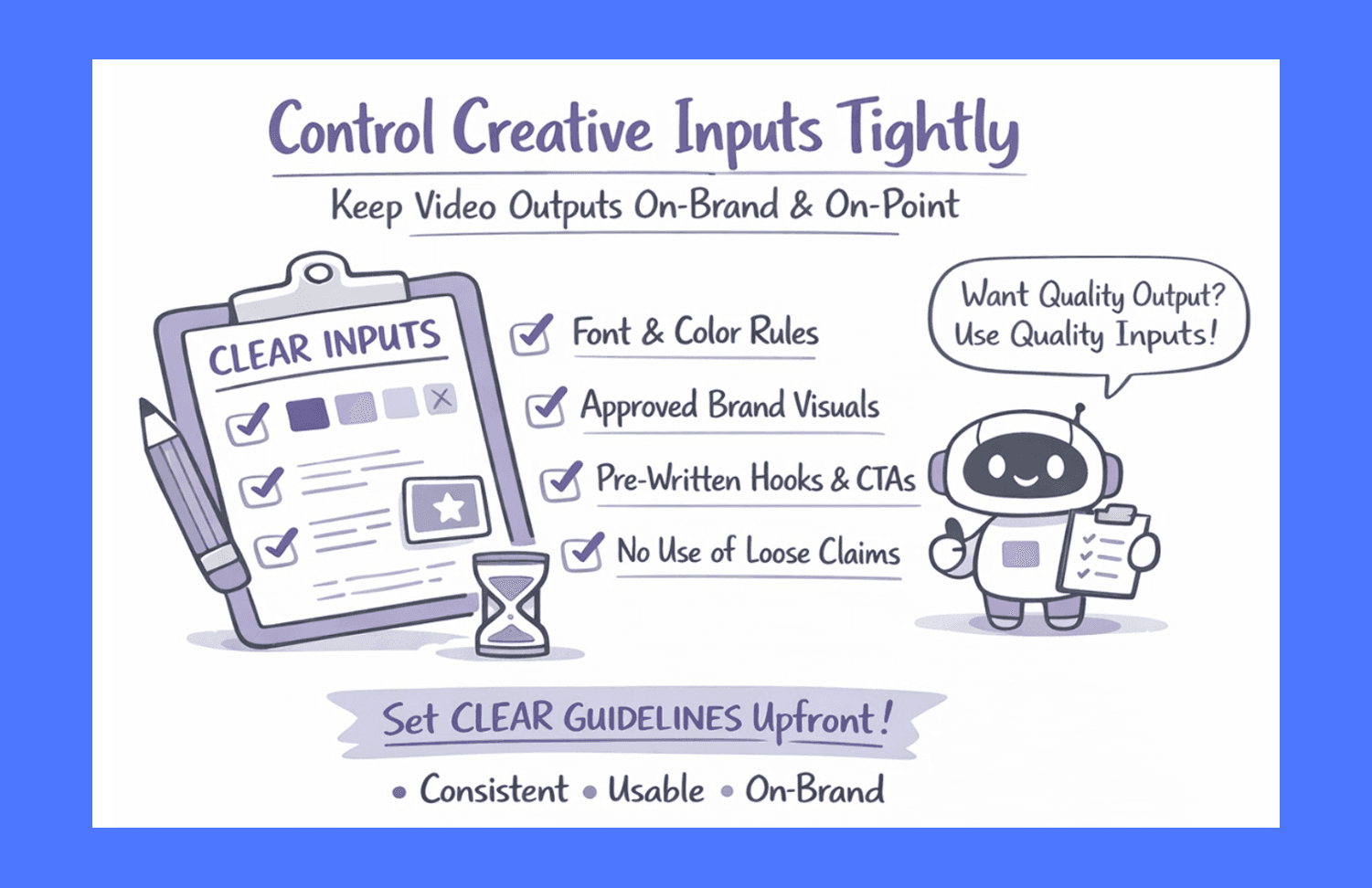

4. Control creative inputs tightly

Output quality depends heavily on what goes in. Loose inputs lead to inconsistent results and more cleanup work later.

Teams that scale smoothly usually define inputs early:

Font and color rules

Approved brand visuals

Clear copy blocks for hooks and CTAs

Do-not-use lists for claims that require proof

When inputs are clear, fewer videos get rejected, and review cycles shorten. This matters more at scale than during small tests.

Clear inputs also protect brand tone. Without them, volume increases tend to drift off brand in subtle ways. Fixing that drift later costs time and attention. Tight inputs upfront keep output usable and predictable, even as volume increases.

5. Use AI video ads to compress iteration cycles, not replace thinking

The real advantage of AI video ads is speed. What usually goes wrong is that teams try to hand over decision-making along with execution.

Strong teams still decide what they are testing. They decide the message, the promise, and the audience context. AI is used to shorten the time between idea and live ad, not to invent a strategy.

This shows up in how iterations are run. Instead of waiting a week for edits, teams test a change within hours. The feedback loop tightens, and decisions get sharper because they are based on fast evidence, not assumptions.

When AI video ads are treated as a shortcut to thinking, performance drops. When they are used to remove delays, performance compounds.

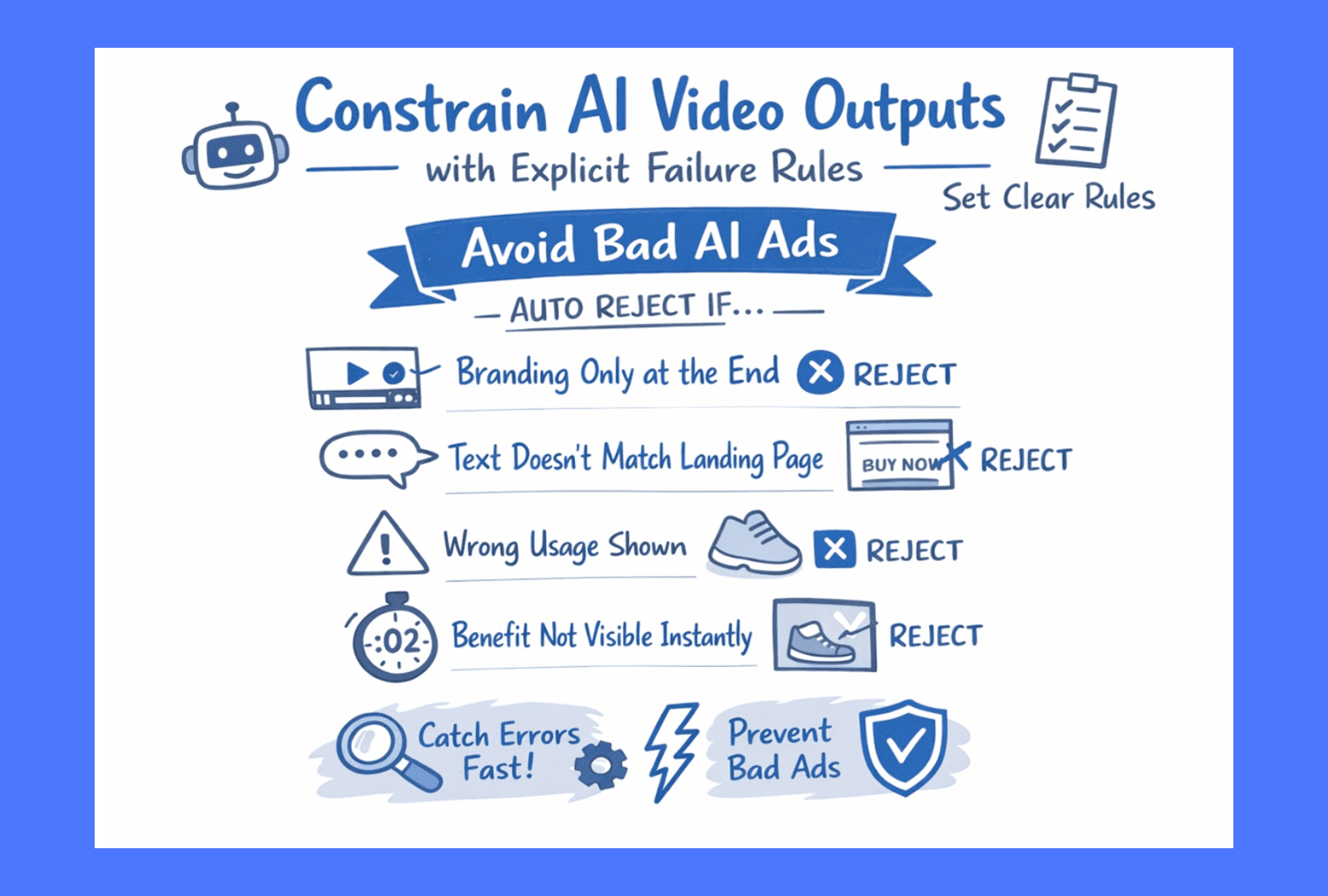

6. Constrain AI video outputs with explicit failure rules

AI video ads fail at scale when teams only define what they want, not what should never happen. At low volume, humans catch issues. At high volume, those issues slip through.

Teams that scale successfully write down clear failure rules before increasing output. These are non-negotiables that automatically disqualify a video from going live.

Examples of failure rules teams use:

If branding appears only at the end, it is rejected

If on-screen text contradicts the landing page, it is rejected

If the visual suggests a use case that the product does not support, it is rejected

If the product benefit is not visible in the first two seconds, it is rejected

These rules matter specifically for AI video because generation happens faster than review. Clear failure rules reduce human review load and prevent subtle errors from multiplying across dozens of ads. Scaling becomes safer when rejection is automated, not debated.
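One way to make rejection automatic is to encode each failure rule as a small check that runs before a video is queued for launch. The sketch below is only an illustration: the `VideoMeta` fields are hypothetical values a reviewer or upstream tool would have to supply, and the "final 20% of runtime" cutoff for end-only branding is an assumed threshold, not a rule from any platform.

```python
from dataclasses import dataclass

@dataclass
class VideoMeta:
    """Hypothetical per-video metadata filled in before launch."""
    first_brand_appearance_s: float  # when branding first shows on screen
    duration_s: float                # total runtime in seconds
    benefit_visible_by_s: float      # when the product benefit first shows
    text_matches_landing_page: bool  # on-screen text agrees with the landing page
    use_case_supported: bool         # visuals only show supported use cases

# Each rule maps a readable name to a check that returns True on violation.
FAILURE_RULES = {
    # Assumption: "only at the end" means first seen in the final 20% of runtime.
    "branding appears only at the end":
        lambda v: v.first_brand_appearance_s >= 0.8 * v.duration_s,
    "on-screen text contradicts the landing page":
        lambda v: not v.text_matches_landing_page,
    "visual suggests an unsupported use case":
        lambda v: not v.use_case_supported,
    "product benefit not visible in the first two seconds":
        lambda v: v.benefit_visible_by_s > 2.0,
}

def failed_rules(video):
    """Return the names of every rule the video violates (empty list = pass)."""
    return [name for name, violated in FAILURE_RULES.items() if violated(video)]

def can_go_live(video):
    """A video goes live only when no failure rule fires."""
    return not failed_rules(video)
```

Returning the list of violated rules, rather than a bare pass or fail, gives the team a record of which rules fire most often, which in turn points at prompts or inputs that need tightening.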

7. Treat prompts as reusable production assets, not one-off inputs

When teams first use AI video tools, prompts tend to be written ad hoc. That works for experiments. It breaks at scale.

High-output teams treat prompts the same way they treat scripts or templates. They are written once, tested, refined, and reused. A good prompt is specific about structure, pacing, tone, and constraints, not just the idea.

Over time, teams usually end up with a small prompt library. One for testimonial-style videos. One for problem-solution formats. One for product demos. Each prompt includes instructions on what must appear, what must not appear, and how the video should open and close.

This matters because prompts shape consistency. Weak prompts create uneven output that needs heavy review. Strong prompts reduce rework and make scaling predictable. At volume, the prompt does more work than the tool itself.
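A prompt library can be as simple as a few named templates kept under version control. The sketch below uses Python's standard `string.Template`; the template wording, the format names, and the `build_prompt` helper are all hypothetical and would need adapting to whichever video tool a team actually uses.

```python
from string import Template

# Hypothetical library: one reusable template per video format.
PROMPT_LIBRARY = {
    "testimonial": Template(
        "Format: testimonial-style video for $product.\n"
        "Open with: $opening\n"
        "Close with: $closing\n"
        "Must appear: $must_appear\n"
        "Must not appear: $must_not_appear"
    ),
    "problem_solution": Template(
        "Format: problem-solution video for $product.\n"
        "Open with: $opening\n"
        "Close with: $closing\n"
        "Must appear: $must_appear\n"
        "Must not appear: $must_not_appear"
    ),
}

def build_prompt(format_name, **fields):
    """Fill a library template with the fields for one video.

    Template.substitute raises KeyError when a placeholder is missing,
    so an incomplete prompt never reaches the generation step.
    """
    return PROMPT_LIBRARY[format_name].substitute(**fields)

prompt = build_prompt(
    "testimonial",
    product="a meal-kit subscription",
    opening="customer speaking to camera",
    closing="logo and CTA card",
    must_appear="the product in use",
    must_not_appear="unverified health claims",
)
print(prompt)
```

Because every template requires the same fields, a missing "must not appear" line becomes an error at build time instead of something a reviewer has to catch later.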

8. Tag every AI video with its generation intent

AI video ads fail at scale when teams forget why a video was created in the first place. At high volume, everything starts to blur together, and results become hard to interpret.

Teams that scale well attach a simple intent tag to every AI-generated video before it goes live. This is not for the platform. It’s for internal learning.

Typical intent tags look like:

Hook test

Visual style test

Message clarity test

Offer framing test

When performance data comes back, the team knows what the video was trying to prove. That makes insights usable. A weak result does not mean “the ad failed.” It means a specific assumption failed.

This practice becomes necessary with AI video ads because volume rises so quickly. Without intent tags, learning collapses into noise. With them, scaling stays intelligible even as output increases.
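Intent tagging needs very little machinery. The sketch below shows one possible shape, assuming a fixed set of allowed tags and a click-through rate per video. The function names, the `assumption` field, and the `ctr_by_video` mapping are illustrative and not tied to any ad platform's reporting API.

```python
from collections import defaultdict

# The four example tags from the list above, normalized to lowercase.
ALLOWED_INTENTS = {
    "hook test",
    "visual style test",
    "message clarity test",
    "offer framing test",
}

def tag_video(video_id, intent, assumption):
    """Attach an intent tag and the assumption under test to a video."""
    if intent not in ALLOWED_INTENTS:
        raise ValueError(f"unknown intent tag: {intent!r}")
    return {"video_id": video_id, "intent": intent, "assumption": assumption}

def group_results_by_intent(tagged_videos, ctr_by_video):
    """Group (assumption, CTR) pairs by intent so results read as answers."""
    grouped = defaultdict(list)
    for video in tagged_videos:
        ctr = ctr_by_video.get(video["video_id"])
        if ctr is not None:  # skip videos with no data yet
            grouped[video["intent"]].append((video["assumption"], ctr))
    return dict(grouped)

videos = [
    tag_video("v1", "hook test", "a question hook holds attention"),
    tag_video("v2", "hook test", "a price-first hook holds attention"),
    tag_video("v3", "offer framing test", "a free trial beats a discount"),
]
report = group_results_by_intent(videos, {"v1": 0.021, "v2": 0.012, "v3": 0.018})
```

Rejecting unknown tags at creation time keeps the vocabulary small, which is what lets results be compared across batches.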

Use Airpost to scale AI video ads without losing quality

Most teams hit a wall when they try to scale AI video ads using general-purpose tools. ChatGPT can help brainstorm. Basic video tools can generate clips. But once you need dozens of ads every week, across formats, angles, and audiences, those tools fall apart. There is no control layer. No creative review.

Airpost is built for that exact problem.

It combines multiple video generation models with a managed creative layer. Instead of prompting in isolation, teams work from a living brief that gets refined over time. Creative strategists review, shape, and guide production so output stays usable, brand safe, and performance-ready. Airpost also uses a proprietary ad taxonomy that categorizes every creative by format, hook type, angle, and performance patterns, so your team always knows what's working and why.

Here's what sets Airpost apart from standard AI video tools:

Proprietary ad taxonomy that tags creatives by format, hook type, angle, and performance data, giving you a structured view of what's driving results

Expert creative strategists who manage your briefs, refine scripts, and monitor ad performance so you're not reviewing raw AI output

A library of 300,000+ real footage clips combined with your own assets and AI-generated footage, so ads don't look generic or overly synthetic

Automatic resizing to vertical and square formats with repositioned text and optimized safe margins for every placement

Built-in brand safety and compliance, including a Disclaimers feature that ensures the right fine print appears in the right places

This setup matters for brands spending serious budgets on Meta, Instagram, and TikTok. They need creative velocity, but they also need consistency. Airpost is designed for teams that want to increase volume without turning ads into guesswork or sacrificing brand integrity.

Get a demo to see how Airpost helps teams produce and scale AI video ads.